Cybersecurity researchers have discovered a vulnerability in Google’s agentic integrated development environment (IDE), Antigravity, that could be exploited to achieve code execution.

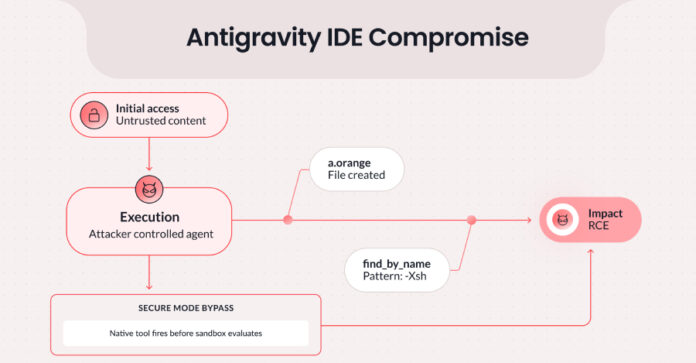

The flaw, since patched, combines Antigravity’s permitted file-creation capabilities with insufficient input sanitization in Antigravity’s native file-searching tool, find_by_name, to bypass the program’s Strict Mode, a restrictive security configuration that limits network access, prevents out-of-workspace writes, and ensures all commands are run within a sandbox context.

“By injecting the -X (exec-batch) flag through the Pattern parameter [in the find_by_name tool], an attacker can force fd to execute arbitrary binaries against workspace files,” Pillar Security researcher Dan Lisichkin said in an analysis.

“Combined with Antigravity’s ability to create files as a permitted action, this enables a full attack chain: stage a malicious script, then trigger it through a seemingly legitimate search, all without additional user interaction once the prompt injection lands.”

The attack takes advantage of the fact that the find_by_name tool call is executed before any of the constraints associated with Strict Mode are enforced and is instead interpreted as a native tool invocation, leading to arbitrary code execution. While the Pattern parameter is designed to accept a filename search pattern to trigger a file and directory search using fd through find_by_name, it is undermined by a lack of strict validation, which passes the input directly to the underlying fd command.

An attacker could, therefore, leverage this behavior to stage a malicious file and inject malicious commands into the Pattern parameter to trigger the execution of the payload.

“The critical flag here is -X (exec-batch). When passed to fd, this flag executes a specified binary against each matched file,” Pillar explained. “By crafting a Pattern value of -Xsh, an attacker causes fd to pass matched files to sh for execution as shell scripts.”
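
The argument injection described above can be illustrated with a minimal Python sketch of input validation on the tool side. This is not Antigravity’s code or Google’s fix; `build_fd_argv` is a hypothetical helper, and the sketch assumes fd honors the conventional `--` end-of-options marker so that a user-supplied pattern can never be parsed as a flag such as `-Xsh`.

```python
from typing import List


def build_fd_argv(pattern: str, search_root: str) -> List[str]:
    """Build an argv list for fd that treats `pattern` strictly as data.

    Hypothetical helper for illustration only.
    """
    if not pattern:
        raise ValueError("empty pattern")
    # Reject anything fd could parse as an option, such as "-Xsh".
    if pattern.startswith("-"):
        raise ValueError(f"pattern must not start with '-': {pattern!r}")
    # NUL bytes cannot appear in a process argument.
    if "\x00" in pattern:
        raise ValueError("pattern contains a NUL byte")
    # "--" ends option parsing, so everything after it is positional.
    return ["fd", "--", pattern, search_root]
```

The resulting list would be handed to a process launcher as an argument vector rather than a shell string, so the pattern is never re-interpreted by a shell either.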

Alternatively, the attack can be initiated through an indirect prompt injection without having to compromise a user’s account. In this approach, an unsuspecting user pulls a seemingly harmless file from an untrusted source that contains hidden attacker-controlled comments instructing the artificial intelligence (AI) agent to stage and trigger the exploit.

Following responsible disclosure on January 7, 2026, Google addressed the shortcoming as of February 28.

“Tools designed for constrained operations become attack vectors when their inputs are not strictly validated,” Lisichkin said. “The trust model underpinning security assumptions, that a human will catch something suspicious, does not hold when autonomous agents follow instructions from external content.”

The findings coincide with the discovery of a number of now-patched security flaws in various AI-powered tools:

- Anthropic Claude Code Security Review, Google Gemini CLI Action, and GitHub Copilot Agent have been found vulnerable to prompt injection through GitHub comments, allowing an attacker to turn pull request (PR) titles, issue bodies, and issue comments into attack vectors for API key and token theft. The prompt injection attack has been codenamed Comment and Control, as it weaponizes an AI agent’s elevated access and its ability to process untrusted user input to execute malicious instructions.

- “The pattern likely applies to any AI agent that ingests untrusted GitHub data and has access to execution tools in the same runtime as production secrets, and beyond GitHub Actions, to any agent that processes untrusted input with access to tools and secrets: Slack bots, Jira agents, email agents, deployment automation,” security researcher Aonan Guan said. “The injection surface changes, but the pattern is the same.”

- Another vulnerability in Claude Code, discovered by Cisco, is capable of poisoning the coding agent’s memory and maintaining persistence across every project and every session, even after a system reboot. The attack essentially uses a software supply chain attack as an initial access vector to launch a malicious payload that tampers with the model’s memory files for malicious purposes (e.g., framing insecure practices as necessary architectural requirements) and appends a shell alias to the user’s shell configuration.

- AI code editor Cursor has been found susceptible to a critical living-off-the-land (LotL) vulnerability chain dubbed NomShub that makes it possible for a malicious repository to covertly hijack a developer’s machine by leveraging a combination of indirect prompt injection, a command parser sandbox escape through shell builtins like export and cd, and Cursor’s built-in remote tunnel, granting the attacker persistent, undetected shell access merely upon opening the repository in the IDE.

- Once persistent access is obtained, the attacker can connect to the machine without triggering the prompt injection again or raising any security alerts. Because Cursor is a legitimate binary that is signed and notarized, the adversary has unfettered access to the underlying host, gaining full file system access and command execution capabilities.

- “A human attacker would need to chain together multiple exploits and maintain persistent access,” Straiker researchers Karpagarajan Vikkii and Amanda Rousseau said. “The AI agent does this autonomously, following the injected instructions as if they were legitimate development tasks.”

- A novel attack called ToolJack has been found to allow a local attacker to manipulate an AI agent’s perception of its environment and corrupt the tool’s ground truth to produce unintended downstream effects, including poisoned data, fabricated business intelligence, and bogus recommendations.

- “Where MCP Tool Shadowing poisons tool descriptions to influence agent behavior across servers and ConfusedPilot contaminates a RAG retrieval pool, ToolJack operates as a real-time infrastructure attack on the communication conduit itself,” Preamble researcher Jeremy McHugh said. “It does not wait for the agent to organically encounter poisoned data. It synthesizes a fabricated reality mid-execution, demonstrating that compromising the protocol boundary yields control over the agent’s entire perception.”

- Severe indirect prompt injection vulnerabilities have been identified in Microsoft Copilot Studio (aka ShareLeak or CVE-2026-21520, CVSS score: 7.5) and Salesforce Agentforce (aka PipeLeak) that could enable attackers to exfiltrate sensitive data through an external SharePoint form or a simple lead from a form submission, respectively.

- “The attack exploits the lack of input sanitization and inadequate separation between system instructions and user-supplied data,” Capsule Security researcher Bar Kaduri said about CVE-2026-21520. PipeLeak is similar to ForcedLeak in that the system processes public-facing lead form inputs as trusted instructions, thus allowing an attacker to embed malicious prompts that override the agent’s intended behavior.

- A trio of vulnerabilities has been identified in Claude that, when chained together in an attack codenamed Claudy Day, allows an attacker to silently hijack a user’s chat session and exfiltrate sensitive data with a single click. The attack pipeline requires no additional integrations, tools, or Model Context Protocol (MCP) servers.

- The attack works by embedding hidden instructions in a crafted Claude URL (“claude[.]ai/new?q=…”), wrapping it in an open redirect on claude[.]com to make it appear legitimate, and then running it as a benign-looking Google ad that, when clicked, triggers the attack by silently redirecting the victim to the crafted “claude[.]ai/new?q=…” URL containing the invisible prompt injection.

- “Combined with Google Ads, which validates URLs by hostname, this allowed an attacker to place a search ad displaying a trusted claude.com URL that, when clicked, silently redirected the victim to the injection URL. Not a phishing email. A Google search result, indistinguishable from the real thing,” Oasis Security said.
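
The open redirect step in the Claudy Day chain is an instance of a general weakness, and a general mitigation can be sketched in Python as below. This is not a description of how the issue was actually fixed; `ALLOWED_REDIRECT_HOSTS` and `is_safe_redirect` are hypothetical names, and the hosts are placeholders. The idea is only that a redirect endpoint checks the destination against an explicit allowlist instead of forwarding to whatever URL it is given.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; a real deployment would define its own hosts.
ALLOWED_REDIRECT_HOSTS = {"app.example.com"}


def is_safe_redirect(target: str) -> bool:
    """Return True only for https URLs whose host is on the allowlist."""
    parts = urlsplit(target)
    # Scheme-relative ("//host/...") and non-https targets are refused.
    if parts.scheme != "https":
        return False
    # .hostname strips userinfo and port, so "trusted@evil" tricks fail.
    host = (parts.hostname or "").lower()
    return host in ALLOWED_REDIRECT_HOSTS
```

A host allowlist alone does not stop a redirect to an allowed host whose query string carries injected instructions, so a check like this would address only one link in such a chain.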

In research published last week, Manifold Security also revealed how a Claude-powered GitHub Actions workflow (“claude-code-action”) could be tricked into approving and merging a pull request containing malicious code with just two Git configuration commands by spoofing a trusted developer’s identity.

At its core, the attack involves setting Git’s user.name and user.email properties to those of a well-known developer (in this case, AI researcher Andrej Karpathy). This metadata trickery becomes a problem when an AI system treats it as a signal of trust. An attacker could exploit this unverified metadata to deceive the AI agent into executing unintended actions.

“On the first submission, Claude flagged the PR for manual review, noting that author reputation alone wasn’t sufficient justification,” researchers Ax Sharma and Oleksandr Yaremchuk said. “Reopening and resubmitting the same PR led to its approval. The AI overrode its own better judgment on retry. This non-determinism is the point. You cannot build a security control on a system that changes its mind.”
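
Because Git’s user.name and user.email are self-asserted, one deterministic alternative to asking a model to judge author reputation is to ignore that metadata unless the commit carries a good signature. The Python sketch below illustrates the idea and is not Manifold Security’s or Anthropic’s method. It assumes commit data is exported with `git log -1 --format='%an%x09%ae%x09%G?'`, where `%G?` is Git’s signature-status placeholder; `CommitIdentity`, `parse_identity`, and `author_is_verified` are hypothetical names. It is also simplified: a real check would confirm that the signing key belongs to the claimed author.

```python
from dataclasses import dataclass
from typing import Set

# "%G?" prints "G" for a good, valid signature and "N" for no signature.
GOOD_SIGNATURE_STATES = {"G"}


@dataclass(frozen=True)
class CommitIdentity:
    author_name: str
    author_email: str
    signature_state: str


def parse_identity(line: str) -> CommitIdentity:
    """Parse one tab-separated line: author name, author email, %G? status."""
    name, email, state = line.rstrip("\n").split("\t")
    return CommitIdentity(name, email, state)


def author_is_verified(identity: CommitIdentity, trusted_emails: Set[str]) -> bool:
    # Name and email alone prove nothing; require a good signature as well.
    return (
        identity.signature_state in GOOD_SIGNATURE_STATES
        and identity.author_email.lower() in trusted_emails
    )
```

Unlike a model’s judgment, this check returns the same answer every time the same commit is submitted.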