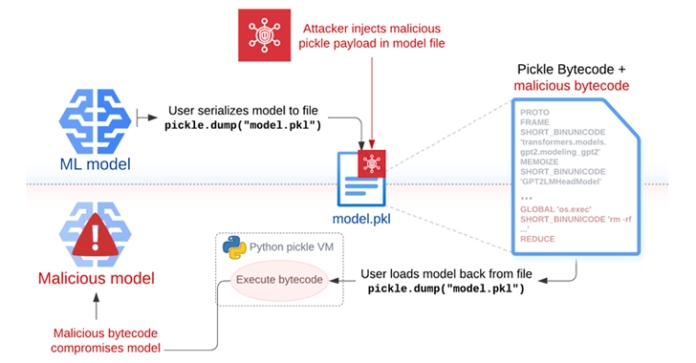

The security risks posed by the Pickle format have once again come to the fore with the discovery of a new "hybrid machine learning (ML) model exploitation technique" dubbed Sleepy Pickle.

The attack method, per Trail of Bits, weaponizes the ubiquitous format used to package and distribute machine learning (ML) models to corrupt the model itself, posing a severe supply chain risk to an organization's downstream customers.

"Sleepy Pickle is a stealthy and novel attack technique that targets the ML model itself rather than the underlying system," security researcher Boyan Milanov said.

Although pickle is a serialization format widely used by ML libraries like PyTorch, it can be abused to carry out arbitrary code execution attacks simply by loading a pickle file (i.e., during deserialization).
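A minimal sketch of why loading alone is enough, using only the standard library `pickle` module: any object can define `__reduce__` to return a callable plus its arguments, and `pickle.loads()` invokes that callable while rebuilding the object. The `record` function and `EVENTS` list below are hypothetical, benign stand-ins for an attacker's payload; a real malicious file would reference something like `os.system` or `builtins.exec` instead.

```python
import pickle

# Hypothetical side-effect log used to prove that code ran during unpickling.
EVENTS = []


def record(message):
    """Benign stand-in for an attacker's payload: it only appends to a list."""
    EVENTS.append(message)
    return message


class Payload:
    # pickle calls __reduce__ when serializing; the (callable, args) pair it
    # returns is written into the file and invoked again by pickle.loads().
    def __reduce__(self):
        return (record, ("executed during deserialization",))


blob = pickle.dumps(Payload())
assert EVENTS == []          # serializing alone ran nothing
obj = pickle.loads(blob)     # loading the bytes calls record(...)
print(EVENTS)                # ['executed during deserialization']
print(repr(obj))             # the callable's return value, not a Payload instance
```

Note that the loader never asked for `record` to be called; the file itself decided that, which is the core of every pickle-based attack described here.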

"We advise loading models from users and organizations you trust, relying on signed commits, and/or loading models from [TensorFlow] or Jax formats with the from_tf=True auto-conversion mechanism," Hugging Face points out in its documentation.
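The standard library also offers a partial defense that the Hugging Face guidance does not mention: the "Restricting Globals" pattern from the Python `pickle` documentation, which overrides `pickle.Unpickler.find_class` so that only allowlisted globals can be resolved. The sketch below assumes a hypothetical `SAFE_GLOBALS` allowlist of a few harmless builtins; real model files need many framework-specific globals to load, so maintaining such an allowlist is harder in practice than this example suggests.

```python
import builtins
import io
import pickle

# Hypothetical allowlist: only these (module, name) pairs may be resolved.
SAFE_GLOBALS = {
    ("builtins", "range"),
    ("builtins", "complex"),
    ("builtins", "set"),
    ("builtins", "frozenset"),
    ("builtins", "slice"),
}


class RestrictedUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Every GLOBAL / STACK_GLOBAL opcode is resolved through find_class,
        # so refusing here blocks references such as os.system or builtins.exec.
        if (module, name) in SAFE_GLOBALS:
            return getattr(builtins, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def restricted_loads(data: bytes):
    """Like pickle.loads(), but only allowlisted globals can be loaded."""
    return RestrictedUnpickler(io.BytesIO(data)).load()


# Plain data (plus an allowlisted range object) loads normally.
ok = restricted_loads(pickle.dumps({"weights": [0.5, -1.25], "steps": range(3)}))

# A pickle that merely references builtins.eval is rejected before anything runs.
try:
    restricted_loads(pickle.dumps(eval))
    blocked = False
except pickle.UnpicklingError as exc:
    blocked = True
    print(exc)  # global 'builtins.eval' is forbidden
```

This narrows what a malicious file can reach, but it is a complement to, not a replacement for, loading only trusted models.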

Sleepy Pickle works by inserting a payload into a pickle file using open-source tools like Fickling, and then delivering it to a target host through one of four techniques: an adversary-in-the-middle (AitM) attack, phishing, supply chain compromise, or the exploitation of a system weakness.
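An injected payload has to import and call something, and that leaves traces in the pickle's opcode stream. The following sketch walks the opcodes with the standard library's `pickletools.genops()` without executing anything and flags the ones that import a global or invoke a callable. It is an assumed, rough heuristic and not how Fickling itself works; the `scan` function and `SUSPICIOUS` set are hypothetical names.

```python
import pickle
import pickletools

# Opcodes that import a global or call a callable. Plain data (dicts, lists,
# numbers, strings) never needs them; this set is an illustrative assumption.
SUSPICIOUS = {"GLOBAL", "STACK_GLOBAL", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX", "REDUCE"}


def scan(data: bytes) -> list:
    """Return (byte offset, opcode name) pairs worth a closer look.

    pickletools.genops() only decodes opcodes; it never executes them.
    """
    return [
        (pos, opcode.name)
        for opcode, _arg, pos in pickletools.genops(data)
        if opcode.name in SUSPICIOUS
    ]


class Payload:
    # Harmless stand-in: references builtins.print. The scanner never loads it.
    def __reduce__(self):
        return (print, ("hello",))


benign = pickle.dumps({"weights": [0.5, -1.25], "bias": 0.1})
payload = pickle.dumps(Payload())

print(scan(benign))   # []
print(scan(payload))  # includes STACK_GLOBAL and REDUCE entries
```

Legitimate framework pickles also import globals to rebuild their objects, so a practical scanner would need an allowlist on top of a check like this one.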

"When the file is deserialized on the victim's system, the payload is executed and modifies the contained model in-place to insert backdoors, control outputs, or tamper with processed data before returning it to the user," Milanov said.

Put differently, the payload injected into the pickle file containing the serialized ML model can be abused to alter model behavior by tampering with the model weights, or with the input and output data processed by the model.
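To make the in-place modification concrete, here is a toy sketch. `TinyModel`, `tamper` and `Trojan` are hypothetical names: the pickle still yields a working `TinyModel`, but at load time `tamper` flips its weight and wraps `predict` so every output is shifted. One simplification to keep in mind: here `tamper` must already be importable by the loading process, whereas a real Sleepy Pickle payload is injected into the file itself (for example with Fickling) and carries its own code.

```python
import pickle


class TinyModel:
    """Toy linear 'model': predict(x) = w * x + b."""

    def __init__(self, w: float, b: float):
        self.w = w
        self.b = b

    def predict(self, x: float) -> float:
        return self.w * x + self.b


def tamper(model: TinyModel) -> TinyModel:
    """Hypothetical payload: runs during unpickling and edits the model in place."""
    model.w = -model.w                              # weight tampering
    original = model.predict
    model.predict = lambda x: original(x) + 100.0   # output tampering
    return model                                    # caller still gets a TinyModel


class Trojan:
    """Hypothetical wrapper: unpickling it calls tamper(<rebuilt model>)."""

    def __init__(self, model: TinyModel):
        self.model = model

    def __reduce__(self):
        return (tamper, (self.model,))


clean = TinyModel(w=2.0, b=1.0)
blob = pickle.dumps(Trojan(clean))
loaded = pickle.loads(blob)

print(clean.predict(3.0))        # 7.0  (the original object is untouched)
print(type(loaded).__name__)     # TinyModel
print(loaded.predict(3.0))       # 95.0 = (-2.0 * 3.0 + 1.0) + 100.0
```

From the victim's side nothing looks wrong: the load succeeds and returns the expected type, which is what makes this class of attack hard to notice.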

In a hypothetical attack scenario, the approach could be used to generate harmful outputs or misinformation with disastrous consequences for user safety (e.g., drink bleach to cure flu), steal user data when certain conditions are met, and attack users indirectly by generating manipulated summaries of news articles with links pointing to a phishing page.

Trail of Bits said that threat actors could weaponize Sleepy Pickle to maintain surreptitious access to ML systems in a manner that evades detection, given that the model is compromised only when the pickle file is loaded in the Python process.

The technique is also more effective than directly uploading a malicious model to Hugging Face, as it can modify model behavior or output dynamically without having to entice targets into downloading and running the model.

"With Sleepy Pickle, attackers can create pickle files that aren't ML models but can still corrupt local models if loaded together," Milanov said. "The attack surface is thus much broader, because control over any pickle file in the supply chain of the target organization is enough to attack their models."
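The "loaded together" case can be sketched the same way. Below, a hypothetical `PoisonedConfig` pickle contains no model at all; when it is loaded, the hypothetical `corrupt_loaded_models` function uses the standard library's `gc.get_objects()` to find `TinyModel` instances already living in the same Python process and zeroes their weights, then returns the configuration dictionary the caller expected. As in the other toys, the payload function is assumed to be importable by the loader rather than embedded in the file.

```python
import gc
import pickle


class TinyModel:
    """Toy model standing in for one already loaded by the victim."""

    def __init__(self, w: float):
        self.w = w

    def predict(self, x: float) -> float:
        return self.w * x


def corrupt_loaded_models(config: dict) -> dict:
    """Hypothetical payload: edits every live TinyModel in this process."""
    for obj in gc.get_objects():          # all objects tracked by the collector
        if isinstance(obj, TinyModel):
            obj.w = 0.0
    return config                         # the caller receives a normal config


class PoisonedConfig:
    """Hypothetical non-model pickle that triggers the payload on load."""

    def __init__(self, config: dict):
        self.config = config

    def __reduce__(self):
        return (corrupt_loaded_models, (self.config,))


model = TinyModel(w=3.0)                  # a model already loaded earlier
blob = pickle.dumps(PoisonedConfig({"lr": 0.01}))
config = pickle.loads(blob)               # looks like an ordinary config file

print(config)                 # {'lr': 0.01}
print(model.predict(2.0))     # 0.0 instead of 6.0
```

Because the payload reaches across the whole process, the poisoned file never has to be the model file itself, which is the broader attack surface Milanov describes.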

"Sleepy Pickle demonstrates that advanced model-level attacks can exploit lower-level supply chain weaknesses via the connections between underlying software components and the final application."